3D display by Integral Imaging


As is well known, over the past decades the idea of projecting 3D movies has died and been resurrected periodically. This time the idea seems to be here to stay, as shown by the current competition in the development of 3D TVs and 3D monitors. Note, however, that people are unlikely to accept wearing special goggles every day in their living room. For this reason, we believe that the next generation of 3D TVs will be auto-stereoscopic. Within the group of auto-stereoscopic displays, the most glamorous and fascinating are holographic displays. However, at present this technique still has some serious problems: the need for coherent illumination in the pickup and display stages, with its inherent speckle problem; the need for reversible holographic materials; and, finally, the huge amount of data associated with holography. At the other extreme we find the auto-stereoscopic displays based on encoding two views of the 3D scene in two interlaced series of strips. This technique is optimal in the sense that the required amount of data is only twice that of classical 2D monitors. But it has two drawbacks. One is that it provides the observer with only one perspective, which can be viewed from only one position. The other is the convergence-accommodation conflict, which produces visual fatigue and sometimes headache.

An attractive alternative to these techniques is the so-called Integral Photography (or Integral Imaging), which was initially proposed by Lippmann in 1908 and was revived about two decades ago thanks to the fast development of electronic matrix sensors and displays. Lippmann's idea was that one can record on a 2D matrix sensor many elemental images of a 3D scene, so that each elemental image stores the information of a different perspective of the object. When this information is projected onto a matrix display (such as an LCD or OLED) placed in front of an array of microlenses, each pixel of the display generates a conical ray bundle. It is precisely the intersection of these ray bundles that produces the local concentration of light density that permits the reconstruction of the scene. This reconstructed scene is perceived as 3D by the observer, whatever his/her position.
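The ray-bundle intersection described above can be sketched with a deliberately simplified model. The sketch assumes a 1D geometry in which each microlens acts as a pinhole at its center, the microlens array lies in the plane z = 0, and the sensor or display lies at z = -gap. The function names and parameters are hypothetical and chosen only for illustration; they do not come from any published implementation.

```python
def project_to_sensor(point_x, point_z, lens_x, gap):
    """Lateral position where the chief ray from an object point hits the sensor.

    Assumed geometry: microlens centers at z = 0, object point at
    z = point_z > 0, sensor (or display) at z = -gap.
    """
    if point_z <= 0:
        raise ValueError("the object point must lie in front of the array")
    return lens_x + gap * (lens_x - point_x) / point_z


def intersect_chief_rays(pixel_a, lens_a, pixel_b, lens_b, gap):
    """Intersection (x, z) of the chief rays emitted by two display pixels.

    Each ray starts at a pixel on the plane z = -gap and passes through
    the center of its own microlens at z = 0.
    """
    slope_a = (lens_a - pixel_a) / gap  # lateral shift per unit depth
    slope_b = (lens_b - pixel_b) / gap
    if slope_a == slope_b:
        raise ValueError("parallel rays: the point lies at infinity")
    z = (lens_b - lens_a) / (slope_a - slope_b)
    x = lens_a + slope_a * z
    return x, z


# Pickup: one object point is recorded behind two neighboring microlenses.
gap = 3.0
pixel_0 = project_to_sensor(0.4, 30.0, lens_x=0.0, gap=gap)
pixel_1 = project_to_sensor(0.4, 30.0, lens_x=1.0, gap=gap)

# Display: the two recorded pixels emit rays that cross at the object point.
x_rec, z_rec = intersect_chief_rays(pixel_0, 0.0, pixel_1, 1.0, gap)
```

In this toy model the two rays cross again at the original lateral position and depth, which is the geometric core of the reconstruction. A real system adds the finite aperture of each microlens, so every pixel emits a cone rather than a single ray.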

One of the problems of integral imaging systems working in their standard configuration is that they provide pseudoscopic images, that is, images reversed in depth. To solve this problem we reported a technique for the formation of real, undistorted, orthoscopic integral images by direct pickup. The technique is based on a Smart Mapping of PIxels (SPIM) of the elemental-images set.

More recently we have exploited the latent possibilities of SPIM and have reported a much more general algorithm. The Smart Pseudoscopic to Orthoscopic Conversion (SPOC) allows the pseudoscopic-to-orthoscopic transformation with full control over the display parameters, such as the pitch, focal length and size of the microlenses, the depth position and size of the microlens array (MLA), the depth position and size of the reconstructed images, and even the geometry of the MLA.
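To give a feeling for what a pixel remapping of the elemental-images set looks like in code, the sketch below implements the simplest classical remapping: rotating every elemental image by 180 degrees about its own center. This is not the SPIM or SPOC algorithm. It is the well-known basic conversion, which yields an orthoscopic but virtual reconstruction, and it is shown only as an illustration. The function name and the list-of-lists image layout are assumptions.

```python
def rotate_elemental_images(integral_image, cell_size):
    """Rotate each cell_size x cell_size elemental image by 180 degrees.

    integral_image is a list of pixel rows. Every elemental image is
    assumed to be a square, axis-aligned block of cell_size pixels.
    """
    rows = len(integral_image)
    cols = len(integral_image[0])
    if rows % cell_size or cols % cell_size:
        raise ValueError("image size must be a multiple of cell_size")

    remapped = [[None] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Top-left corner of the elemental cell that contains (r, c).
            cell_r = (r // cell_size) * cell_size
            cell_c = (c // cell_size) * cell_size
            # Mirror the local coordinates inside the cell on both axes.
            new_r = cell_r + cell_size - 1 - (r - cell_r)
            new_c = cell_c + cell_size - 1 - (c - cell_c)
            remapped[new_r][new_c] = integral_image[r][c]
    return remapped


captured = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
converted = rotate_elemental_images(captured, cell_size=2)
```

Each pixel stays inside its own elemental cell, so the mapping is purely local. More general remappings can also move pixels between cells, which is what makes it possible to change the display parameters.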

Some of the main challenges faced by our group in improving the performance of the integral imaging (InI) technique are: avoiding faceting, improving the lateral resolution, enhancing the depth of field by preventing facet braiding, and enhancing the viewing angle of InI monitors. The first problem (the faceting effect) results from the vignetting that occurs when the observer looks through the microlenses. The observer sees only a small portion of the reconstructed scene through each microlens. The global effect is that the observer sees a kind of puzzle in which each piece is the facet seen through the corresponding microlens. The typical faceting effect occurs when the fill factor of the microlenses is smaller than one. In that case, any gap between microlenses inherently results in empty space between facets in the retinal image. We can then conclude that the use of amplitude modulation masks to change the transmission properties of the microlenses is strongly discouraged in the display stage of any InI implementation.

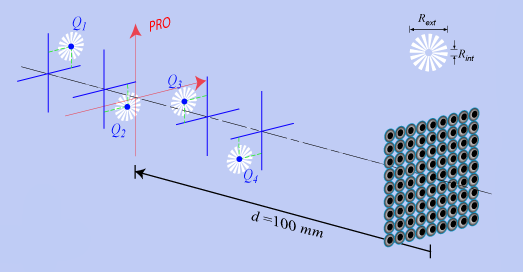

The lateral resolution of the captured elemental images is mainly determined by sensor constraints and by diffraction effects. To improve the lateral resolution of an InI system in the capture stage, we have suggested a hybrid technique based on binary amplitude modulation during the capture process and Wiener filtering of the captured elemental images by computer processing. To illustrate the method, we have calculated the elemental images of four spoke targets for the pickup architecture shown below on the left. In the center we show the reconstructed images obtained with the proposed hybrid method, and on the right the images reconstructed with the conventional setup.
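The computational half of such a hybrid scheme, the Wiener filtering step, can be sketched as follows. This is a minimal 1D illustration and not the processing actually applied to the elemental images. It assumes a known blur kernel, circular convolution, and a constant noise-to-signal ratio `nsr`, and all function names are hypothetical. A naive discrete Fourier transform is used so that the example depends only on the Python standard library.

```python
import cmath


def dft(values, inverse=False):
    """Naive O(n^2) discrete Fourier transform (forward or inverse)."""
    n = len(values)
    sign = 1 if inverse else -1
    spectrum = []
    for k in range(n):
        total = sum(
            values[m] * cmath.exp(sign * 2j * cmath.pi * k * m / n)
            for m in range(n)
        )
        spectrum.append(total / n if inverse else total)
    return spectrum


def circular_blur(signal, kernel):
    """Circular convolution of two equal-length sequences."""
    n = len(signal)
    return [
        sum(signal[(i - j) % n] * kernel[j] for j in range(n))
        for i in range(n)
    ]


def wiener_deconvolve(blurred, kernel, nsr):
    """Wiener filter: F = G * conj(H) / (|H|^2 + nsr), then inverse DFT."""
    H = dft(kernel)
    G = dft(blurred)
    F = [g * h.conjugate() / (abs(h) ** 2 + nsr) for g, h in zip(G, H)]
    return [value.real for value in dft(F, inverse=True)]


# Toy data: a bar blurred by a symmetric three-tap kernel.
signal = [0, 0, 1, 1, 1, 0, 0, 0]
kernel = [0.6, 0.2, 0, 0, 0, 0, 0, 0.2]
blurred = circular_blur(signal, kernel)
restored = wiener_deconvolve(blurred, kernel, nsr=1e-12)
```

With noise-free data and a very small `nsr` the filter behaves almost like an exact inverse. With real, noisy captures a larger `nsr` trades some sharpness for noise suppression.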

The main limitation in depth of field (DOF) of an InI device appears in the display stage, due to the conflict between the imaging capacity of the microlenses and the ray-bundle-intersection nature of InI reconstruction. As we see in the animation below, a point object within the reference plane (point A) produces a set of sharp elemental images on the sensor. Other points (B and C, for example) produce blurred elemental images. In the reconstruction stage, the elemental images are reproduced on a matrix display placed in front of the microlenses. The conical ray bundles generated by the pixels intersect at the object positions, giving rise to the 3D reconstruction. However, since the matrix display is still conjugate with the reference plane, all the conical bundles focus on that plane. When the observer looks through the microlenses, his/her eyes naturally focus on the reference plane, and therefore what he/she sees is a matrix of bright dots.
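The role of the reference plane can be made concrete with the thin-lens equation, 1/gap + 1/d = 1/f. The sketch below is a paraxial, purely geometric approximation that ignores diffraction and pixel size. The function names and the numbers used are illustrative assumptions, not parameters of any actual setup.

```python
import math


def conjugate_distance(focal_length, gap):
    """Distance from the microlens to the plane conjugate with the display.

    Solves 1/gap + 1/d = 1/focal_length for d. When the display sits at
    the focal plane, the conjugate plane is at infinity.
    """
    if gap < focal_length:
        raise ValueError("virtual conjugate plane is not handled in this sketch")
    if gap == focal_length:
        return math.inf
    return focal_length * gap / (gap - focal_length)


def blur_diameter(focal_length, gap, lens_diameter, object_distance):
    """Geometric blur-spot diameter on the sensor for an on-axis point."""
    if object_distance <= focal_length:
        raise ValueError("object must lie beyond the focal length")
    # Distance behind the lens at which the point is sharply imaged.
    image_distance = focal_length * object_distance / (object_distance - focal_length)
    # Similar triangles: cone of width lens_diameter converging at image_distance.
    return lens_diameter * abs(gap - image_distance) / image_distance


# Illustrative numbers in millimeters (assumed).
reference_plane = conjugate_distance(focal_length=3.0, gap=3.3)
blur_far = blur_diameter(3.0, 3.3, lens_diameter=1.0, object_distance=66.0)
```

With these numbers the reference plane lies about 33 mm from the microlens, and a point twice as far away is recorded as a spot of roughly 0.05 mm rather than as a sharp dot.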

This effect is known as the facet braiding phenomenon, and it imposes the most important limitation on the reconstruction of 3D scenes with long depth. As shown in animation (a) below, the detrimental consequences of facet braiding are more apparent when observing continuous 3D scenes. In this experiment the reference plane was set at the position of the optometrist doll. The braiding is clearly apparent in the windows and chimney of the house. We have recently shown that braiding-free integral imaging is possible. To obtain it, it is necessary to adjust the display setup so that the matrix display is conjugate with infinity. Proceeding in this way, some lateral resolution is sacrificed, but the quality of the reconstructed image is homogeneous (b).

(a)

(b)

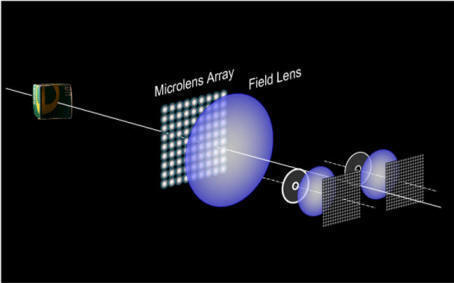

Another problem of InI realizations is the overlapping between elemental images when working with large scenes. The conventional proposal to avoid this is the use of physical barriers between the elemental cells. An alternative solution, recently reported by our group, is the implementation of the barriers by purely optical means. In particular, we suggested the use of Telecentric RElay Systems (TRES). Due to the telecentricity of the relay, the aperture stop is back-projected virtually onto the front focal plane of each microlens. Then only rays that pass through the projected micropupils, and therefore emerge from the microlens parallel to its optical axis, will reach the sensor.

The TRES concept can be used for multiple applications. One application is the enhancement of the viewing angle of Integral Imaging monitors by use of a Multiple Axis Telecentric RElay System (MATRES).

Since the TRES can be used to project any amplitude transmittance modulation onto the microlenses, and therefore for parallel apodization of the lenses, one proposal is to project the transmittance of a lens of variable optical power to produce a micro-zoom array. This permits fast, electronic focusing on objects located at different distances. For experimental verification, we inserted a VARIOPTIC ARTIC-314 liquid lens at the aperture stop of a camera lens and combined it with a large-diameter lens in a telecentric manner. With this setup we obtained a series of elemental-image sets in which the in-focus plane was tuned.
